how I got whitepilled on morality

there are natural, impersonal, normative facts, and we have fallible but non-useless intuitions about them

There’s been some chatter about moral realism recently, pro and con:

or here: https://substack.com/home/post/p-145693160 (substack is being janky about rendering this as either a block or hyperlink, sorry!)

Where I’m coming from

Shortly after trying to think seriously about morality (middle school), I adopted hedonic utilitarianism. It seemed to explain all non-bullshit moral judgments, or at least to make them plausible, using only a few principles, or maybe even just one. That made it theoretically superior to the main theory I had been exposed to up to that point, which was just that a bunch of things were good or bad without any particular reason for being so.

By shortly after undergrad, though, it seemed to me that a noncognitivist/expressivist/subjectivist viewpoint better captured the truth. (I believe something in this sphere expresses Lance’s beliefs.) Utilitarianism only required that you stipulate that pleasure is good and pain is bad to explain all moral judgments, but that’s arguably two too many. If what people were doing was expressing their opinions and preferences, then I could banish those two last stipulations as well, and rely only on purely materialist premises. Further, normative ethics no longer had any kind of parsimony constraint, because its reference was in complex psychology rather than elegant metaphysics.

Thesis, antithesis, meet synthesis: I now think you need no additional stipulations or axioms to get to moral realism, and the correct normative ethics is preference utilitarianism (so yes utilitarianism but yes this is all downstream of preferences.)

What follows is my best (i.e., probably still clumsy) account of why.

relevant natural facts

Suppose there’s a ball of mass m=10 stone traveling at velocity v=5 knots. Then for functions

f(m) = m + 1 stone, g(m, v) = 1/2 mv^2, and a third function h(m, v),

it’s a fact that f=11 stone, g=125 stone-knots-squared, and h=84.85ish root-stone-knots. Moreover these are natural facts, grounded in the physical ball weighing some amount (10 stone) and travelling at some speed (5 knots).1

Which of these are relevant to us depends on our purposes. For instance, if a company is charging me by weight for things I send, and I want to send this ball and some other object weighing one stone, f(m) may be quite relevant. But g(m, v) turns out to be relevant across a wide variety of contexts - even though humans a few centuries ago didn’t have such a concept, I expect that any sufficiently advanced aliens likely would; it’s the formula for kinetic energy.
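As a quick check of the arithmetic, here is a minimal Python sketch. The names `f` and `g` follow the text; the formula for `f` (the ball plus a one-stone parcel) is inferred from the shipping example and should be read as an assumption, while `g` is the usual kinetic-energy formula. Units stay in stone and knots, as in the example.

```python
def f(m: float) -> float:
    """Total shipping weight in stone: the ball plus a one-stone parcel (assumed form)."""
    return m + 1


def g(m: float, v: float) -> float:
    """Kinetic energy, 1/2 * m * v**2, here in stone-knots-squared."""
    return 0.5 * m * v ** 2


m, v = 10, 5  # the ball from the example: 10 stone at 5 knots
print(f(m))     # 11
print(g(m, v))  # 125.0
```

Both numbers are equally true of the ball; what differs is how many purposes each one serves.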

When we say something “is a thing,” or “carves reality at its joints,” I think part of what we’re doing is discussing the extent to which it’s - in addition to being true - relevant in this way. Of course, relevancy (as opposed to truth) is not objective, but it can be convergent.

Things like the formula for kinetic energy form much of our model for what really, scientifically real truths look like.2 Most knowledge we can (and must) operate on is more fuzzy, and less certain, than this, though like relevancy these are matters of degree. Indeed, some of the non-kinetic forms energy can take are a bit messier, though they still form part of the overall tapestry of nature and, from an ontological point of view, kinetic energy is just one surface manifestation of this broader thing.

As it happens, I think Kant came pretty close to specifying a scientifically useful and practically relevant schema for natural facts (as T = 1/2 mv^2 is a schema for natural facts). To be clear: a more colloquial version of this formula had been crafted (convergently!) by various thinkers of the Axial Age under the rubric of the Golden Rule; I think there’s still more work to do in formulating it better; and Kant’s own ideas about how to apply the schema (it’s immoral to masturbate but not to rat out your Jewish neighbors to the Nazis) range from eyeroll-inducing to atrocious.3 Here I want to say that the formula

whether the world w(X) pareto dominates w(Y), from the perspective of everyone involved, where w(φ) is the world resulting from agents acting on the basis of decision rule φ

is a natural4 fact which is highly relevant to many contexts, most importantly to politics and other forms of collective deliberation. Notably this is a partial ordering relation over decision rules in the context of some situation; it is not necessarily a total ordering over such, since for many pairs of rules neither resulting world Pareto dominates the other. In fact, it superficially seems to apply in very few situations, although I’ll argue that when you plug it into itself, it becomes much more general - in fact, prescriptive of something like preference utilitarianism.
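To make the "partial ordering" point concrete, here is a small Python sketch. It uses the standard textbook definition of Pareto dominance (nobody worse off, somebody strictly better off), which is an assumption about the intended reading; the three worlds and their utility numbers are hypothetical, invented purely for illustration.

```python
from typing import Sequence


def pareto_dominates(x: Sequence[float], y: Sequence[float]) -> bool:
    """True if x is at least as good as y for every agent and strictly better for some agent."""
    if len(x) != len(y):
        raise ValueError("both worlds must list a utility for the same agents")
    no_one_worse = all(a >= b for a, b in zip(x, y))
    someone_better = any(a > b for a, b in zip(x, y))
    return no_one_worse and someone_better


# Hypothetical utilities for three agents in the worlds produced by three decision rules.
w_cooperate = [3, 3, 3]
w_defect = [1, 1, 1]
w_exploit = [5, 0, 3]

print(pareto_dominates(w_cooperate, w_defect))   # True
print(pareto_dominates(w_cooperate, w_exploit))  # False
print(pareto_dominates(w_exploit, w_cooperate))  # False: these two are incomparable
```

The last two lines show why the relation settles so few cases on its face: as soon as one agent gains while another loses, neither world dominates.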

To give an account of why this is so, I’ll have to lay some more groundwork.

from nihilism to egoism

A lot of the arguments against moral realism rely on Hume’s guillotine - that one cannot derive an ought from an is. I think this guillotine is just straightforwardly blunted by the case of pragmatic reasons and/or hypothetical imperatives:

If you want to eat something tasty, the fact that Doritos would be tasty to you is a reason to eat them.

If you want to not die, the fact that Drano is toxic is a reason not to drink it.

If you want (due to complicated moral reasoning, uncomplicated moral reasoning, or just sentiment) kids not to die of preventable diseases, the fact that donating to the Malaria Consortium efficiently reduces malaria deaths is a reason to donate to it.

These aren’t stance-independent or impersonal reasons - they’re reasons for particular agents with particular preferences or desires. Still, they show that normativity can be derived from natural facts alone in a straightforward way.

Of course, perhaps I just have lower standards than Hume, who didn’t think you could make claims about causality either. Or perhaps you don’t like using language of normativity for inferences from these preference-counterfactual pairs - if so, adjust the language from here on out to be about “schnormativity,” or whatever, although these really seem to me to be prototypical cases of having a reason to do a thing.

from two-boxing to one-boxing

Let’s suppose you’re contemplating these preference-given reasons for things, and, for convenience, start talking about what you prefer on net in terms of expected utility and temporal discounting and that type of thing. To be more specific, let’s assume that:

There’s some action you could perform - let’s say building a shelter on your deserted island - that would cost you 30 utility on the day you do it and then gain you 10 utility per day from then on.

You have a hyperbolic discounting rate - valuing tomorrow at 50% of today and each day thereafter at 80% of the previous day.

You expect to live for another twenty days at any given point (suppose there’s a 1/20 chance of a tsunami, or whatever.)

In this case, if my spreadsheet is right, you have a cumulative expected reward of 9.6 if you build tomorrow, 4.6 if you build today, and 0 if you build never. So clearly, if you operate by the maxim “always act to maximize your current expected reward” like a naive agent, you should build tomorrow.

But that isn’t an option - if you operate by that principle, you’ll reason the same way tomorrow, and never build the shelter, which actually means an expected reward of 0, which is less than 4.6.

By contrast, if you operate according to “act as if you had time-consistent preferences, (say, each day is worth 80% of the previous day)” then you will build the shelter today. This (4.6) isn’t as good as building the shelter tomorrow (9.6), but it is better than the actual alternative of building the shelter never (0).5

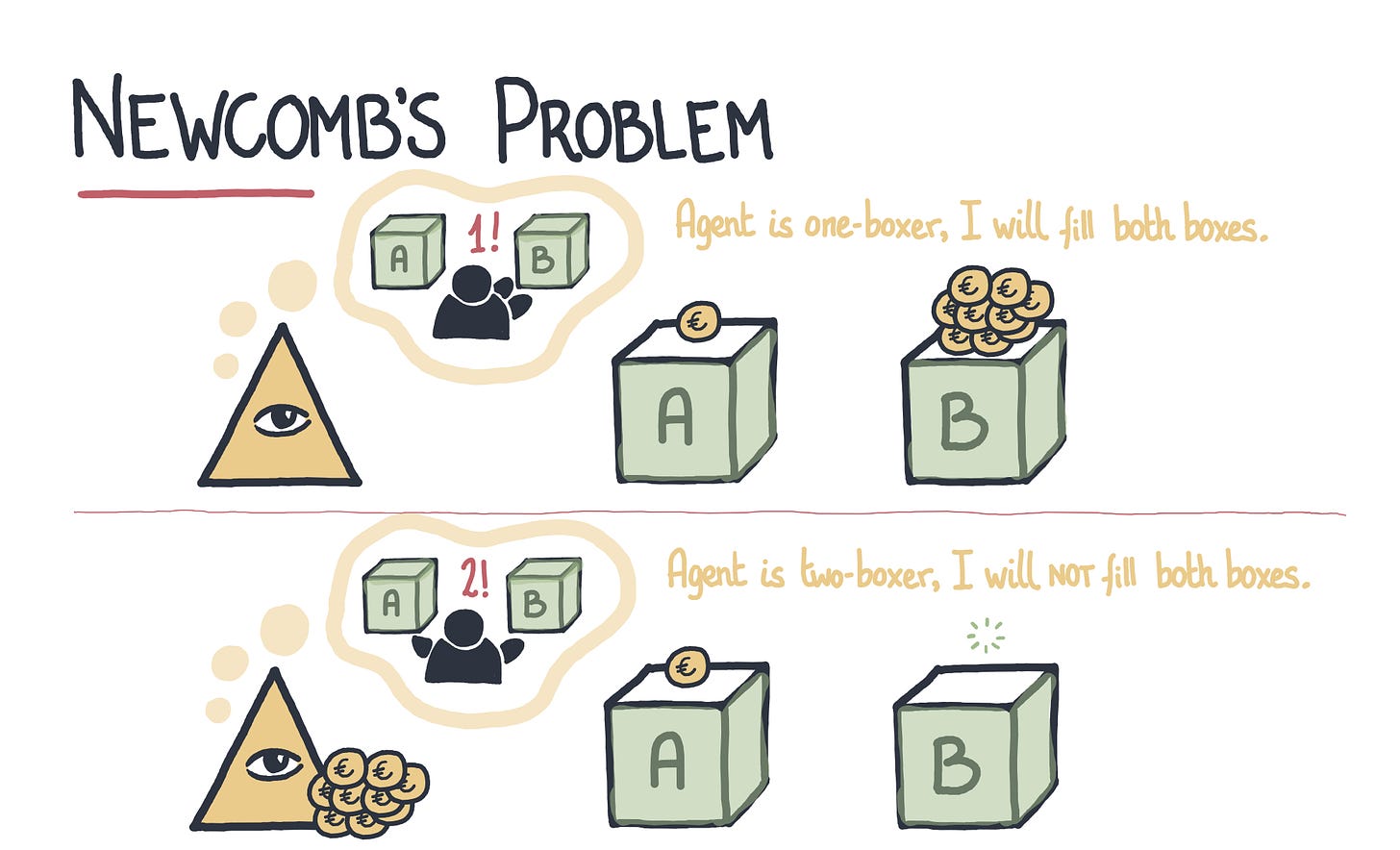
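The figures quoted above can be reproduced with a short Python sketch, under a few assumptions the text doesn’t spell out: a fixed 20-day horizon (days 0 to 19) stands in for the 1/20 daily risk, and the +10 payoff starts on the build day itself. The constant and function names are made up for illustration. Strictly speaking, the "50% then 80%" schedule is what economists call quasi-hyperbolic (beta-delta) discounting.

```python
HORIZON = 20   # days 0..19; assumption: a fixed horizon stands in for the 1/20 daily risk
COST = 30      # one-off cost paid on the day you build
BENEFIT = 10   # payoff on the build day and on every later day (assumption)


def hyperbolic_weights(n: int) -> list[float]:
    """Today counts 1, tomorrow 0.5, and each later day 0.8 of the day before it."""
    return [1.0] + [0.5 * 0.8 ** (k - 1) for k in range(1, n)]


def exponential_weights(n: int) -> list[float]:
    """Time-consistent discounting: each day is worth 0.8 of the previous day."""
    return [0.8 ** k for k in range(n)]


def value_of_building(build_day: int, weights: list[float]) -> float:
    """Discounted value, as seen from today, of building the shelter on build_day."""
    benefits = sum(BENEFIT * w for w in weights[build_day:])
    return benefits - COST * weights[build_day]


hyp = hyperbolic_weights(HORIZON)
exp = exponential_weights(HORIZON)

print(round(value_of_building(1, hyp), 1))  # 9.6   build tomorrow, actual preferences
print(round(value_of_building(0, hyp), 1))  # 4.6   build today, actual preferences
print(round(value_of_building(0, exp), 1))  # 19.4  build today, as-if 80% preferences
print(round(value_of_building(1, exp), 1))  # 15.4  build tomorrow, as-if 80% preferences
```

Under the as-if preferences, today beats tomorrow (19.4 against 15.4), so the agent following that maxim really does build today, and collects 4.6 by its actual lights instead of the 0 that comes from postponing forever.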

The same dynamic shows up in a more straightforward if less realistic form in Newcomb’s Problem. God offers you two boxes and you can take any combination of them you like, and A has $1,000 in it; but with His middle knowledge of what you will do, He puts $100,000 in B iff you would only take B. The correct6 answer is to take B only.
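The payoffs can be written out as a small Python sketch. The `accuracy` parameter is an addition not in the setup above (where the prediction is perfect), and the function computes expected payoff conditional on your choice, which is exactly the step a two-boxer would dispute; treat it as an illustration of the one-boxer’s bookkeeping rather than a refutation.

```python
BOX_A = 1_000      # always in box A
BOX_B = 100_000    # in box B only if taking B alone was predicted


def expected_payoff(one_box: bool, accuracy: float = 1.0) -> float:
    """Expected dollars, where `accuracy` is the chance the prediction matches your choice."""
    if one_box:
        # B is full exactly when the prediction was right.
        return accuracy * BOX_B
    # You always get A; B is full only when the prediction was wrong.
    return BOX_A + (1 - accuracy) * BOX_B


print(expected_payoff(one_box=True))   # 100000.0
print(expected_payoff(one_box=False))  # 1000.0
```

On this accounting, taking B alone comes out ahead whenever the predictor is right more than 50.5% of the time, so nothing hinges on the predictor being infallible.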

Parfit’s hitchhiker is another such scenario.

In general, agents have reasons to transform themselves into agents that avoid Dutch booking, akrasia, etc. We might even say, without stepping yet outside the bounds of ethical egoism, that there’s a categorical imperative (at least, reason) to become more rational in this sense, for any agents with any baseline preferences at all.

from one-boxing to anthropic practical reasoning

The hitchhiker, Newcomb, and akrasia scenarios only include you. But we can extend a lot of the same principles to scenarios where there are many agents only imperfectly like you - again, we are not going beyond ethical egoism as yet.

Suppose the Joker kidnaps you and 50 other people (but you can’t coordinate with them,) sequestering you in a cell in the Jokernaut. He’s going to offer each of you a choice between a slap in the face and non-poisonous cake - an otherwise easy choice, but then, he’s going to torture and kill everyone who picks the majority option.

You could think yourself in circles trying to pick a determinately best answer: “well, if it made no difference, I guess it’s better to have the cake; but if most people think that way, then I’m better off choosing the slap; but if most people think that way…”

Note that to even get that far - and not just immediately choose the cake because you have no idea what people would otherwise choose - you’re drawing an analogy between your own reasoning processes and everyone else’s. But once you do so, there is a best answer, which is “flip a coin.” Any reasoning process that picks a particular response is, if that reasoning is widespread, going to get you tortured and killed.

Flipping a coin doesn’t give you encouraging odds, but it’s better than any other option, unless you think you’re soooooo different from everybody else.
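Here is a rough Python sketch of those odds, assuming all 51 captives run the same reasoning and so independently pick cake with the same probability `q`. That shared-strategy assumption, and the function names, are illustrative simplifications.

```python
from math import comb

OTHERS = 50  # the other captives; with you that makes 51, so there are no ties


def prob_at_most(k_max: int, n: int, p: float) -> float:
    """P(Binomial(n, p) <= k_max)."""
    return sum(comb(n, k) * p ** k * (1 - p) ** (n - k) for k in range(k_max + 1))


def survival_probability(q: float, others: int = OTHERS) -> float:
    """Chance you land in the minority when everyone picks cake with probability q."""
    # You are in the minority if at most others // 2 - 1 of the others match your pick
    # (assumes `others` is even, so the total head count is odd).
    limit = others // 2 - 1
    survive_with_cake = prob_at_most(limit, others, q)
    survive_with_slap = prob_at_most(limit, others, 1 - q)
    return q * survive_with_cake + (1 - q) * survive_with_slap


print(survival_probability(1.0))            # 0.0: everyone picks cake, everyone dies
print(round(survival_probability(0.5), 3))  # 0.444
```

Any shared deterministic rule scores zero, and on a coarse grid of values for `q` the fair coin does best, at a bit under even odds.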

from anthropic practical reasoning to acausal coordination

But wait! Maybe there’s a better option!

At 9:00 PM, the Joker is going to take a break to concentrate on his second-favorite activity, using the Notes feature on Substack. If some unknown % of you rattle your cages really hard, the ill-built Jokernaut will collapse, allowing all of you to escape. However, if only a minority rattle the cages really hard, he’ll have all the participants in the attempted Jokerbreak immediately tortured to death (those keeping still will still get their chance.)

Now, without anthropic or altruistic considerations, participating in this seems like a bad idea: there’s a 1/101 chance your participation makes the crucial difference, and otherwise it looks strictly worse to participate. But with anthropic considerations (and as yet no sympathetic concern, or moral concern outside of what we’ve built up here, for the other victims),7 it is at least plausible that the right move is to participate in the jailbreak. It might depend on how similar you think the others’ reasoning is to yours, or on the exact odds.

Nevertheless I believe we are at a form of moral realism here: we have pretty general-purpose reasons to bias ourselves in favor of cooperation, and these reasons would apply to aliens, including aliens who did not know about them.

from similarity-weighted to general cooperation

I said above that the math might be different depending on just how similar the other parties were. For example, the math is very, very easy if everyone is a perfect atomic copy of you: as in the earlier cases, they’re guaranteed to act identically.

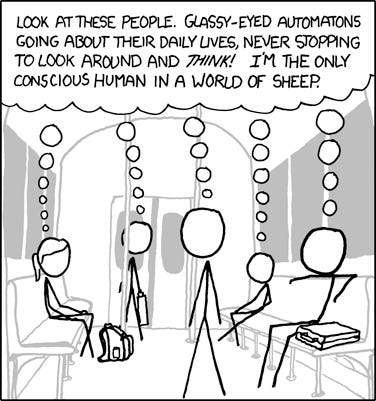

What about people of different personality, politics, or demographic background than you - people whose reasoning process is likely to differ from yours more than that of people similar to you? Is this a license to go with the people who say “morality is for the ingroup”? To just drop all the preceding bullshit and go back to naive egoism if you’re sufficiently unique?

On a first pass, maybe - and to at least some extent personality and politics are anterior to the kinds of decisions people are making. But note that a meta application of the cooperation bias principle means you should be really hesitant about the kinds of reasoning (which, historically/psychologically, we see people repeating all the time) that say: we are the only cooperators, most people other than me are idiot NPCs, blah blah blah. If you’re on this site you’re exposed to a lot of not-especially-impressive people who believe they’re Zarathustra.

Now, I don’t want to say that nobody is a special snowflake. The Joker sure seems like a special snowflake in a world of us NPCs; posts by others have mentioned Jeffrey Dahmer. But even the Joker can reason, “well, I want to kidnap people and subject them to convoluted thought experiments, but other people also want to subject me to terrible punitive conditions because I’m both dangerous to them and kinda weird… maybe if I gave 10% more consideration to them, in the ways that are easiest for me, they would…”8

Even if this only impacts psychological motivations by 0.00005%, it does so along a gradient people can track and talk and reason about. That becomes important when we go…

from acausal to causal cooperation (politics)

What if people in the Jokernaut can talk to each other? Then I think, at least if we’re talking about humans, the probability that they’ll escape approaches 1. Why?

First, there’s a reputational economy established. Everybody wants to signal that they’re a good cooperator, which raises the estimate that the plan will work, in which case the selfish concern is to avoid the shame of shirking participation and gain the glory of participating in the Jokerbreak.

Second, there’s an understanding of others as really in the same situation as themselves, and as other people in general with hopes and dreams and blah blah blah. This increases the expected acausal weight of your decisions (yes, you really are reasoning similarly to them) and your sympathetic concern for them.

These two factors reinforce each other: the more you think of people as people, the more (cet par) you care about your standing in their eyes; and the more you virtue signal concern for some end, the more (cet par) you’re likely to adopt it as an actual end.

from rational discoverability to imperfect truth-tracking intuitions

I think the account above gives us an answer to the question “how can atheists believe we just happened to evolve to know cosmic truths about the nature of morality?” The answer has one component related to the nature of naturalist moral realism itself, and another related to the particular normative ethics that arises in this account (preference rule utilitarianism.)

The answer that flows from naturalist moral realism is just that natural facts are very often discoverable facts. Now, of course, not all natural facts are discoverable facts; quantum mechanics places some pretty strict limits on some things we can know; general relativity on what we can know outside our light cone; Gödel’s theorem on some mathematical truths. But if we can discover QM or general relativity or the incompleteness theorem in the first place, it’s not so crazy for us to figure out some9 of the decision/game theoretic truths of normative ethics, or more mundane particular applied ethical truths.

Further: our evolved instincts and cultural institutions are just not that hopeless here - not, to be clear, infallible, but also not hopelessly lost. Because if the content of normative ethics that follows from the above account is rule preference utilitarianism, then for a social species with a sophisticated theory of mind, one that evolved in small egalitarian bands of shifting coalitions debating and coming up with rules to bind them for mutual benefit, situations like the Jokerbreak and “if everyone here were to…” reasoning would have been everyday fare. To go back to the original example, people have theories of folk physics that are highly imperfect and fallible, but good enough for daily navigation of the world and for providing a starting point for further refinement and investigation.10

My suspicion is that for a non-social species, moral truths would be discoverable, but more of a feat akin to discovering quantum mechanics than to discovering how to knap flint.

a note on agency and free will

I note that the picture of agency here is one that, if not reliant on perfect determinism, is at least compatible with it, and does rely on at least some amount of mechanistic determination: that is why a perfect copy of you would act just as you would and why you can expect reasoning processes similar to your own to arrive at similar results.

If we intuitively think of fully determinate, “clockwork” creatures as lacking moral agency or patiency, maybe this reflects the approach to be taken towards processes that can be fully modeled within our heads, and thus ruled out as parallel to our own. By contrast, mechanistic but computationally irreducible processes (such as we are to ourselves) maintain the “mysteriousness” necessary for these kinds of considerations. But that’s just somewhat unhinged speculation.

what moral realism gets us

I’m glad moral realism is true - so please count this section as points against my claims! It shouldn’t be too suspiciously convenient, but it is, and here are three reasons why.

I don’t think you need moral realism to be morally good, in ways small or big. Sympathetic concern and virtue signaling are more than enough to get you there, and even among people who think about weird decision-theoretic concerns, sympathetic concern and virtue signaling surely play the larger role.

I do think moral realism makes it easier to explain why impartial altruism is the sort of thing that commands some weight in virtue signaling in the first place. I think all the Darwinian and Marxian mechanisms present in history point towards brutally instrumental ideologies that would never converge on something as self-sacrificing as impartial altruism, but philosophers keep on coming up with it anyway, and it keeps on influencing (not dominating, but influencing) the worldly discourses, like the Holy Spirit invading history, the moon drawing ocean spray each evening to erode the marble face of Pharaoh on Mt. Rushmore.

Second - and this is a point I borrow from Alasdair MacIntyre - moral realism lets us employ moral discourse to persuade each other by reason, and not just as a form of manipulation.

Finally, moral realism as outlined above lets us have psychic powers to acausally influence other agents, working as a distributed hive mind communicating faster than the speed of light or even backwards through time. I admit this is kind of weird and maybe gives additional points against the theory, but you have to admit: isn’t it kind of cool?

1. I won’t address here whether there are non-natural facts. Plausibly mathematical truths are such a thing.

2. Perhaps you are a scientific antirealist and reject that all out of hand. However, if I can convince you that morality is as real as kinetic energy I consider my job mostly done.

3. And in the next sentence I’ll be invoking Vilfredo Pareto, who was even worse on this score!

4. I can think of two objections to naturalness here. The first is that this is a fact about agents acting on the basis of decision rules; if you think that such agents (human minds, and maybe others, perhaps angels, or God) are e.g. non-natural immortal souls, then these might not be natural facts - if so, revise this account as necessary. The second objection is that you don’t want to entertain hypotheticals or counterfactuals as natural facts; if so I think you’d also have to revise (though if you revise so hard you can’t entertain hypotheticals or counterfactuals at all, I strongly suspect you can’t engage in practical reason at all.)

5. It’s also not as good as if you actually had a time-consistent 80% discount rate - 19.4! - but that’s not your utility function, just the utility function you’re acting as if you have, precisely because doing so allows your actual utility function to be maximized.

6. Somehow philosophers do worse than chance on this. My concession to epistemic humility is to say “if you can explain the two-boxing position, please do so” because I’m really puzzled by this.

7. Don’t worry about sympathetic considerations for the Clown Prince of Crime; he’s actually indifferent to what his victims do or have happen to them and is just in it for the love of running philosophical thought experiments.

8. Yes, this is an argument for not just throwing feels-good punitive “justice” at Jeffrey Dahmer!

9. Of course, there might be others. These would still be reasons, not just reasons we had useful access to. For instance suppose you know you are going to die soon but not that shortly thereafter (regardless of what you do) all humanity will die in an unrelated event; this would be a reason (albeit a reason you could not act on the basis of) not to dedicate your will to charity and instead spend it now on orgies or at any event immediate-return charities, all else being equal.

10. The framing of the original question has biased me towards evpsychy explanations, but much of the same would apply to a psychological model based on in-lifetime experience, which involves a lot of interaction, observation, and negotiation with others.

I confess I am failing to follow a key step in the discussion. Why does having generally discoverable rational reasons to co-operate in certain circumstances mean moral realism is true? To me, it doesn’t count as moral if ‘co-operative’ actions are taken only because the expected value from a purely selfish perspective is positive. Ditto if the actions are chosen from fear of public punishment or from the selfish need to inspire others to take a course of action that is personally beneficial. Objective morality is true (it seems to me) only if there are reasons that mandate acting unselfishly in some circumstances.

https://open.substack.com/pub/burbanklabs/p/pareto-utilitarianism?r=2xwcs4&utm_medium=ios pretty similar!